Background and motivation. The Internet was born in 1969, when the first data transmission between two computers about 600 km apart took place. It was the first step into the world of data transmission. Over the following decades the Internet, the global wide area network (WAN), has changed significantly, and the distances between communicating nodes have grown accordingly. Reliable data transmission across the globe, and even beyond it, using the Internet Protocol is now a critical foundation for humankind.

However, two transport protocols, TCP and UDP, still form the basis of almost all Internet communication. The principal difference between them is reliability. TCP is a reliable transport protocol used mainly in transaction-based communication such as web applications, file exchange and the like. UDP is a simple message-oriented transport layer protocol that provides datagrams and is suitable as a foundation for building other protocols. Both protocols were developed almost 40 years ago, when data was transmitted over local networks where:

- RTT was up to 20 ms;

- capacity ranged from a few to tens of megabits per second.

The volume of data and the distances involved were far smaller than they are today. Consequently, the advantages these protocols offer do not always carry over to networks of modern architecture.

Networks with a large bandwidth-delay product are called long fat networks (LFNs), and a TCP connection operating over an LFN is called a long fat pipe (LFP). The communication industry faces two main problems: how to transmit Big Data through an LFP, e.g. between continents, and how to avoid congestion on such connections more effectively. The TCP concept cannot fully meet the data transmission needs of modern society.
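To make the bandwidth-delay product concrete, here is a minimal sketch that computes it for two paths and shows how a fixed window caps throughput on a long path. The link speeds and RTTs are assumed, illustrative values rather than measurements, and the function names are hypothetical. The 65,535-byte window is the largest one TCP can advertise without the window scale option.

```python
def bdp_bytes(bandwidth_bps: float, rtt_s: float) -> float:
    """Bandwidth-delay product: bytes that must be in flight to fill the pipe."""
    return bandwidth_bps * rtt_s / 8


def window_limited_rate_bps(window_bytes: int, rtt_s: float) -> float:
    """Upper bound on throughput when at most one window is sent per RTT."""
    return window_bytes * 8 / rtt_s


# Assumed example paths (illustrative values, not measurements).
lan = bdp_bytes(10e6, 0.020)   # 10 Mbit/s link, 20 ms RTT
wan = bdp_bytes(10e9, 0.300)   # 10 Gbit/s link, 300 ms RTT

# Largest TCP window without the window scale option (16-bit field).
classic_window = 65_535
limit = window_limited_rate_bps(classic_window, 0.300)

print(f"LAN BDP: {lan / 1e3:.0f} kB")
print(f"WAN BDP: {wan / 1e6:.0f} MB")
print(f"64 KiB window at 300 ms RTT: {limit / 1e6:.2f} Mbit/s")
```

Under these assumptions the long path needs several orders of magnitude more data in flight than the local one, which is why buffer sizing and window handling dominate performance on an LFN.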

RMDT protocol. In the FILA team, we pursue new approaches to data transmission that optimize the utilization of the network infrastructure. We propose a set of novel approaches and sophisticated congestion control algorithms. One of them is a novel approach to optimizing Application Layer Multicast (ALM) using the Non-dominated Sorting Genetic Algorithm II (NSGA-II) for multiple network metrics, including round-trip time (RTT), available bandwidth, and other relevant parameters. To achieve this, we have incorporated the advanced capabilities of the RMDT protocol for efficient data transfer. Our proposed method focuses on optimizing the multicast tree structure to provide more robust and efficient data dissemination across the network. By employing NSGA-II, we optimize multiple objectives simultaneously, which yields a well-rounded solution that adapts to various network conditions. RMDT is a point-to-multipoint data transport protocol that provides reliable multi-gigabit Big Data distribution across the world. It works in any IP-based network and can handle high packet loss as well as high RTT and jitter.
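The multi-objective selection behind the ALM tree optimization can be illustrated with the ranking step that gives NSGA-II its name: sorting candidates into Pareto fronts. The sketch below is a simplified illustration, not the project's implementation. The `Candidate` type, the tree names and the metric values are hypothetical, only two objectives are used, and the rest of NSGA-II (crowding distance, selection, crossover, mutation) is omitted. It also uses a plain quadratic comparison instead of the bookkeeping of the fast non-dominated sort.

```python
from typing import NamedTuple


class Candidate(NamedTuple):
    name: str               # hypothetical label of a multicast tree layout
    rtt_ms: float           # worst-case RTT in the tree (lower is better)
    bandwidth_mbps: float   # bottleneck bandwidth in the tree (higher is better)


def dominates(a: Candidate, b: Candidate) -> bool:
    """a dominates b if it is no worse in every objective and better in one."""
    no_worse = a.rtt_ms <= b.rtt_ms and a.bandwidth_mbps >= b.bandwidth_mbps
    better = a.rtt_ms < b.rtt_ms or a.bandwidth_mbps > b.bandwidth_mbps
    return no_worse and better


def non_dominated_sort(population: list[Candidate]) -> list[list[Candidate]]:
    """Split the population into Pareto fronts, best front first."""
    remaining = list(population)
    fronts = []
    while remaining:
        # A candidate belongs to the current front if nothing left dominates it.
        front = [c for c in remaining
                 if not any(dominates(o, c) for o in remaining)]
        fronts.append(front)
        remaining = [c for c in remaining if c not in front]
    return fronts


# Made-up candidate trees for illustration only.
trees = [
    Candidate("star", rtt_ms=300, bandwidth_mbps=400),
    Candidate("chain", rtt_ms=900, bandwidth_mbps=950),
    Candidate("binary", rtt_ms=450, bandwidth_mbps=800),
    Candidate("skewed", rtt_ms=600, bandwidth_mbps=700),
]
fronts = non_dominated_sort(trees)
for rank, front in enumerate(fronts, start=1):
    print(rank, [c.name for c in front])
```

Candidates in the first front are trade-offs that no other candidate beats on both metrics at once. A genetic algorithm would keep these preferentially when forming the next generation.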

Unique RMDT protocol features include:

- Multiple recipients within a single session

- Reliability at full rate over WAN

- End-to-end 10G communication (1×10)

- Efficient use of system resources

- Runs on legacy IP infrastructure

- Suitable for streaming

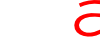

The RMDT protocol can be used in WANs to ensure maximum bandwidth utilization. It is resistant to high packet loss, high RTT, and delay jitter. Our research demonstrated that it can deliver up to 950 Mbps per receiver in the presence of 2% packet loss and 300 ms RTT. Thanks to the patent-pending ABC algorithms, the protocol copes with very aggressive cross-traffic through highly efficient rate adaptation, which moves RMDT to the next level of intelligence compared with competitors. Under the same conditions, competitors' multi-destination protocols perform 5 to 20 times slower than RMDT.
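For context on the 2% loss and 300 ms RTT figures, the well-known Mathis et al. approximation gives a rough ceiling for a single classic loss-based TCP flow under the same conditions. This is a back-of-the-envelope sketch and not a measurement of any competitor. The 1460-byte MSS is an assumed typical value, and the model describes Reno-style congestion avoidance, so newer TCP variants may behave differently.

```python
import math


def mathis_rate_bps(mss_bytes: int, rtt_s: float, loss: float) -> float:
    """Mathis et al. approximation for loss-based TCP: (MSS/RTT) * C/sqrt(p)."""
    c = math.sqrt(3 / 2)   # model constant for periodic loss without delayed ACKs
    return (mss_bytes * 8 / rtt_s) * c / math.sqrt(loss)


# Conditions quoted in the text; the MSS is an assumption.
rate = mathis_rate_bps(mss_bytes=1460, rtt_s=0.300, loss=0.02)
print(f"Approximate loss-based TCP ceiling: {rate / 1e6:.2f} Mbit/s")
```

Under these assumptions the estimate stays well below 1 Mbit/s, which illustrates why a transport that treats every loss as a congestion signal struggles on such paths.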

The following key features enable RMDT to provide data transport with efficient resource usage:

- Low-delay transmission: In high-speed data transmission, keeping delays low is a challenge, because processing retransmissions at rates of tens of gigabits per second can be difficult. Nevertheless, there are use cases for low-delay, high-speed data transmission that need efficient solutions. Bulk transfer is inappropriate for sequential byte stream delivery because lost data is retransmitted with huge delays. Bulk data transfer is therefore not a suitable technique for file transport scenarios that require very low transport latency for particular data segments. RMDT is based on a simple stream that copes effectively with packet loss and reaches multi-gigabit rates in live stream transmission thanks to the inherently low delay of all data segments of a data block. This is achieved through a fast memory management and retransmission subsystem.

- Congestion and flow control: Bottleneck Queue Level (BQL) is a delay-based congestion control algorithm designed to use the full available bottleneck bandwidth while keeping the bottleneck buffer queue at a low level for fast data transmission. Packet loss tolerance and other RMDT features allow BQL to deliver significantly higher data transmission performance than traditional solutions.

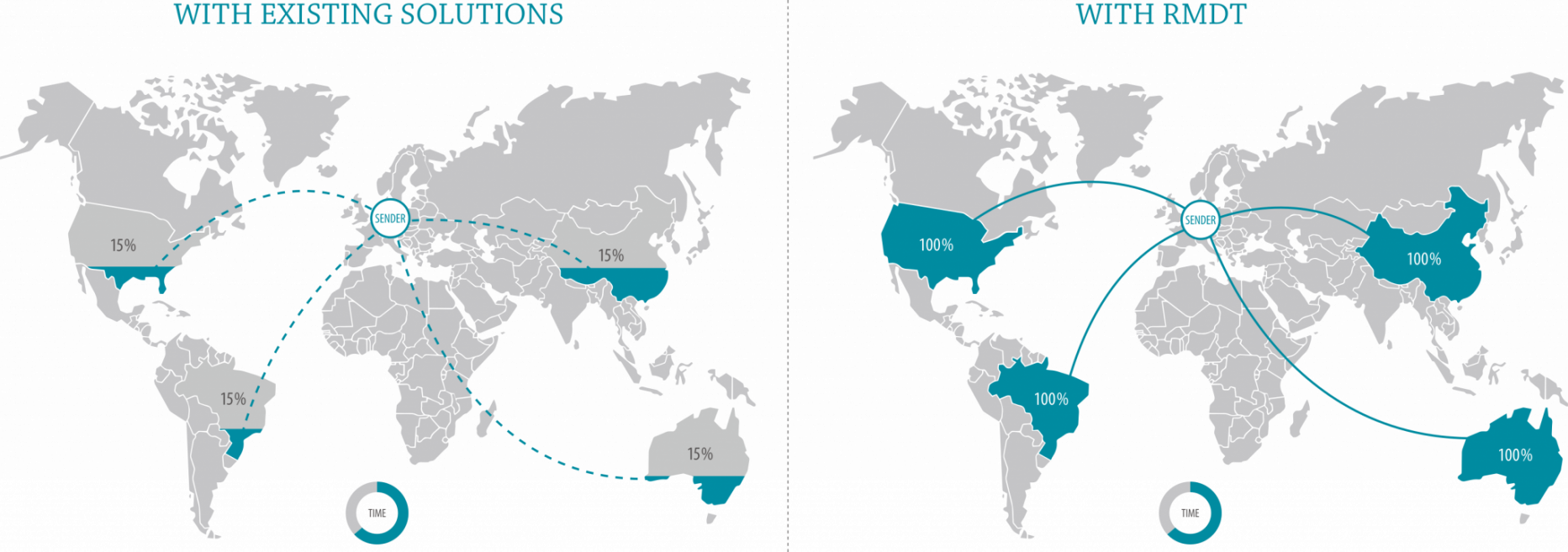
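The general idea of delay-based congestion control can be sketched as follows: the sender estimates the queuing delay as the difference between the current RTT and the lowest RTT seen so far, and lowers its rate only when that queue estimate grows. This is a toy illustration of the principle and not the BQL algorithm. The class name, the thresholds and the step sizes are all assumptions chosen for readability.

```python
class DelayBasedRateController:
    """Toy delay-based rate controller (illustrative only, NOT BQL)."""

    def __init__(self, rate_bps: float, target_queue_s: float = 0.005,
                 increase_bps: float = 1e6, decrease_factor: float = 0.9):
        self.rate_bps = rate_bps
        self.target_queue_s = target_queue_s    # tolerated queuing delay
        self.increase_bps = increase_bps        # additive probe step
        self.decrease_factor = decrease_factor  # multiplicative back-off
        self.base_rtt_s = float("inf")          # lowest RTT observed so far

    def on_rtt_sample(self, rtt_s: float) -> float:
        """Update the sending rate from one RTT measurement."""
        self.base_rtt_s = min(self.base_rtt_s, rtt_s)
        queuing_delay = rtt_s - self.base_rtt_s
        if queuing_delay > self.target_queue_s:
            # The bottleneck queue is building up: back off.
            self.rate_bps *= self.decrease_factor
        else:
            # The queue is short: probe for more bandwidth.
            self.rate_bps += self.increase_bps
        return self.rate_bps


ctrl = DelayBasedRateController(rate_bps=100e6)
for rtt in (0.300, 0.301, 0.302, 0.320, 0.330, 0.303):
    rate = ctrl.on_rtt_sample(rtt)
    print(f"rtt={rtt * 1e3:.0f} ms -> rate={rate / 1e6:.2f} Mbit/s")
```

Packet loss does not appear as an input here, so an isolated loss does not reduce the rate. That matches the spirit of a loss-tolerant, delay-based design, although a real controller needs far more careful filtering of RTT samples.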

- Multi-point: Simultaneous data exchange between multiple locations increases overall throughput and reduces memory usage. From the network's point of view, an RMDT multi-point session is merely a set of unicast streams tied to a single transport session, which avoids IP multicast and the issues associated with it. RMDT's native support of the multi-destination mode ensures stream transmission to multiple locations at the highest possible speed.

- High-precision time-related functions: A high-performance timer library (HighPerTimer) has been developed. It is twice as precise as standard Linux OS timers, and its modified sleep function has about a thousand times fewer wake-up errors than the standard C library sleep functions.
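The wake-up error of a sleep function, as discussed for the timer library above, can be measured with a short experiment. The sketch below compares the standard `time.sleep` with a common hybrid technique that sleeps coarsely and then busy-waits until the deadline. It is written in Python for illustration only and says nothing about how HighPerTimer works internally. `precise_sleep`, `wakeup_error_s` and the 2 ms spin margin are assumptions, and the absolute numbers depend on the machine and its load.

```python
import time


def precise_sleep(duration_s: float, spin_s: float = 0.002) -> None:
    """Sleep coarsely, then busy-wait for the last spin_s seconds."""
    deadline = time.perf_counter() + duration_s
    coarse = duration_s - spin_s
    if coarse > 0:
        time.sleep(coarse)
    while time.perf_counter() < deadline:
        pass  # spin until the deadline; trades CPU time for precision


def wakeup_error_s(sleep_fn, duration_s: float) -> float:
    """Return how much later than requested sleep_fn actually returned."""
    start = time.perf_counter()
    sleep_fn(duration_s)
    return time.perf_counter() - start - duration_s


for name, fn in (("time.sleep", time.sleep), ("precise_sleep", precise_sleep)):
    errors = [wakeup_error_s(fn, 0.005) for _ in range(20)]
    mean_us = sum(errors) / len(errors) * 1e6
    print(f"{name}: mean wake-up error {mean_us:.0f} us")
```

The busy-wait keeps one core occupied for the spin interval, so a paced sender has to balance timing precision against CPU consumption.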

Project site: BitBooster.

Project managers: Dmitry Kachan, Kiril Karpov.